London and Boston, 23rd February 2026 – Snap Analytics and Dataiku today announced a strategic partnership designed to help organisations turn artificial intelligence into a reliable, everyday business capability. The partnership combines Dataiku’s enterprise AI platform with Snap’s delivery and operating model, giving companies a clear path from experimentation to production-grade AI.

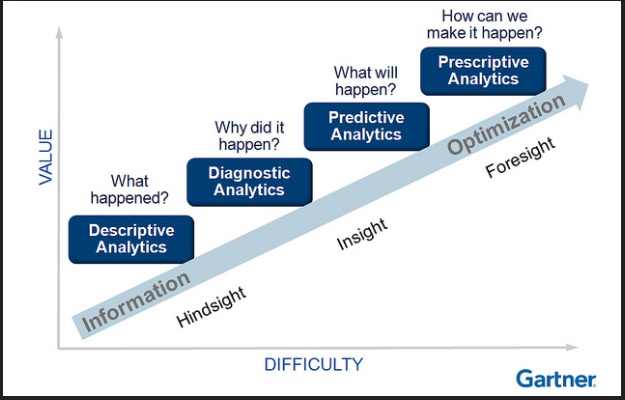

Across every industry, interest in generative AI has surged. Leaders want systems that can predict outcomes, automate decisions and support teams in real time. Yet most organisations remain stuck in pilots. Their data is fragmented. Their models are difficult to govern. Their analytics tools were built for reporting, not for running AI at scale. As a result, promising use cases fail to move into the core of the business.

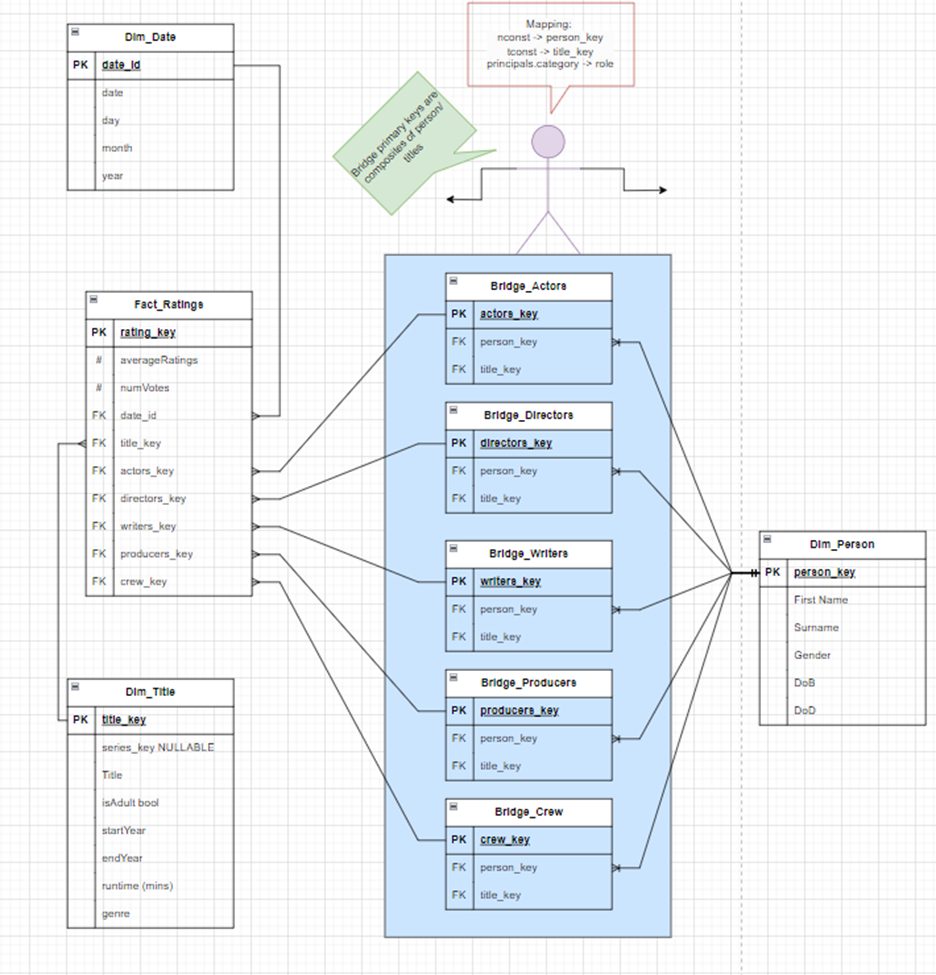

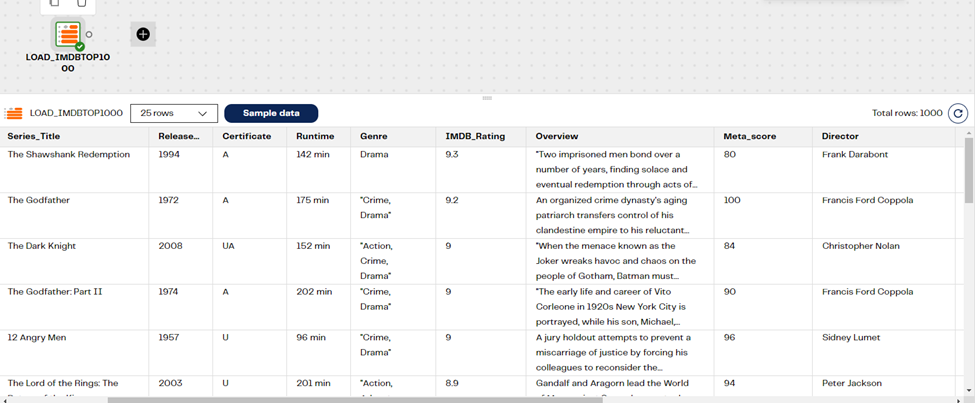

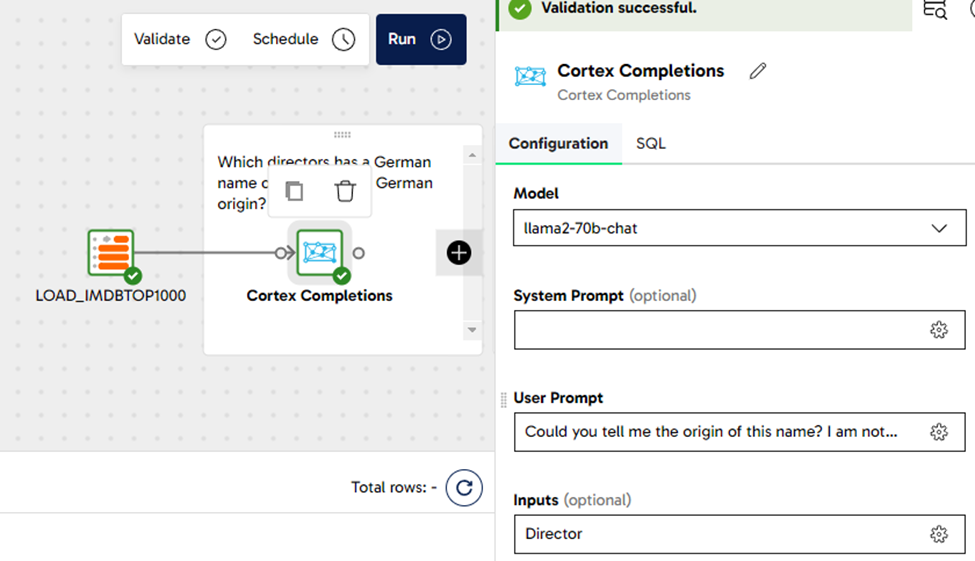

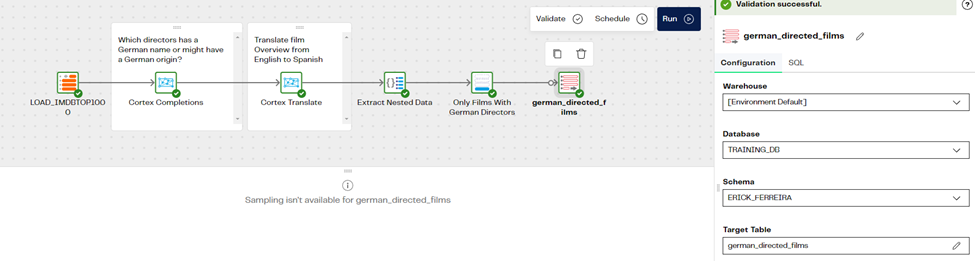

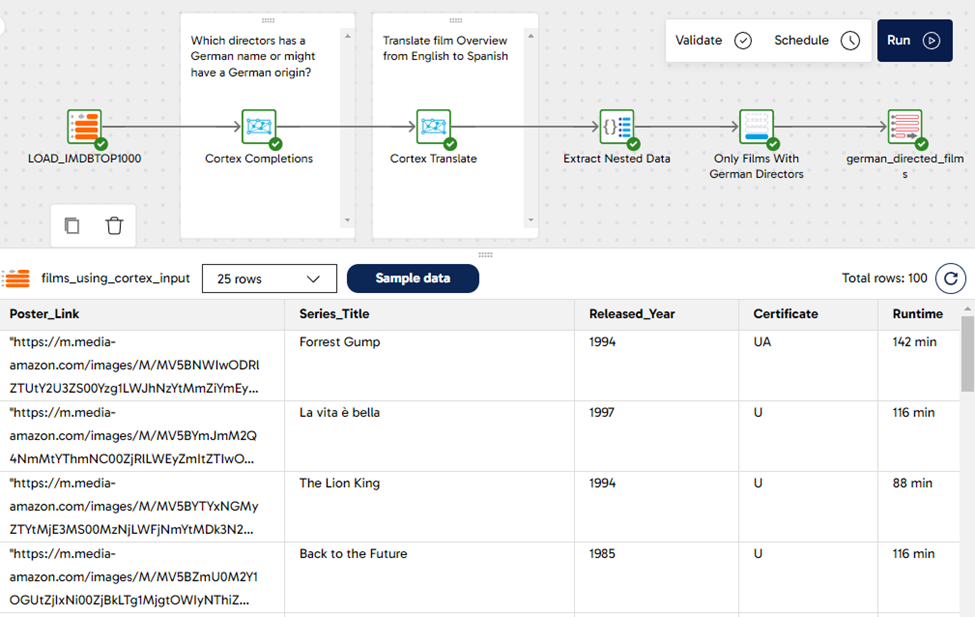

The Snap and Dataiku partnership is designed to close that gap. Dataiku provides a platform that allows organisations to build, deploy and govern AI models in one place. Snap provides the teams that connect data, design workflows, apply governance and ensure that AI continues to deliver value after it goes live. Together, they create a simple outcome: AI that works in the real world.

“Everyone is talking about AI, but very few organisations are using it to run their business,” said David Rice, Chief Executive Officer of Snap Analytics. “Dataiku gives organisations a powerful platform to build and manage AI. Snap makes it operational. Together we help clients move from experimentation to AI that delivers real results.”

As organisations adopt more advanced forms of AI, governance has become a growing concern. Boards and regulators expect transparency, traceability and control. At the same time, business leaders want faster decisions and better insight. The partnership addresses both. By combining Dataiku’s control layer for models with Snap’s delivery approach, organisations can scale AI without losing trust or oversight.

“Companies want AI they can trust and scale,” said Simon P., Partner Manager at Dataiku. “Snap brings the delivery model that makes Dataiku stick. This partnership gives customers a practical way to move from building models to running them as part of their everyday operations.”

The rise of generative AI has also exposed the limits of older analytics platforms. Tools that were built for manual workflows and batch reporting struggle to support the speed and complexity of modern AI. Many organisations are now looking to move away from fragile legacy approaches and towards platforms that can manage data, models and decisions together.

“Gen AI has raised expectations across the business,” said Calvin Fuss, Head of AI Practice at Snap Analytics. “Leaders want systems that can think, predict, and act. Dataiku provides the control and orchestration layer for AI. Snap provides the people and execution. Together we give organisations something far more powerful than traditional analytics tools.”

What sets the partnership apart is how AI is delivered. Snap embeds AI engineers and data specialists inside client teams, working alongside the business to build, deploy and maintain AI in production. This approach turns Dataiku from a software platform into a lasting enterprise capability, ensuring that AI continues to evolve as business needs change.

Snap Analytics and Dataiku believe this model represents the future of enterprise AI: not disconnected tools or short-lived pilots, but a governed, embedded capability that supports everyday decision-making and long-term growth.